Building a Sports Event Scheduling System with AI

Over the past few days, I used AI and the Vibe Coding approach to build a complete sports event scheduling system from scratch.

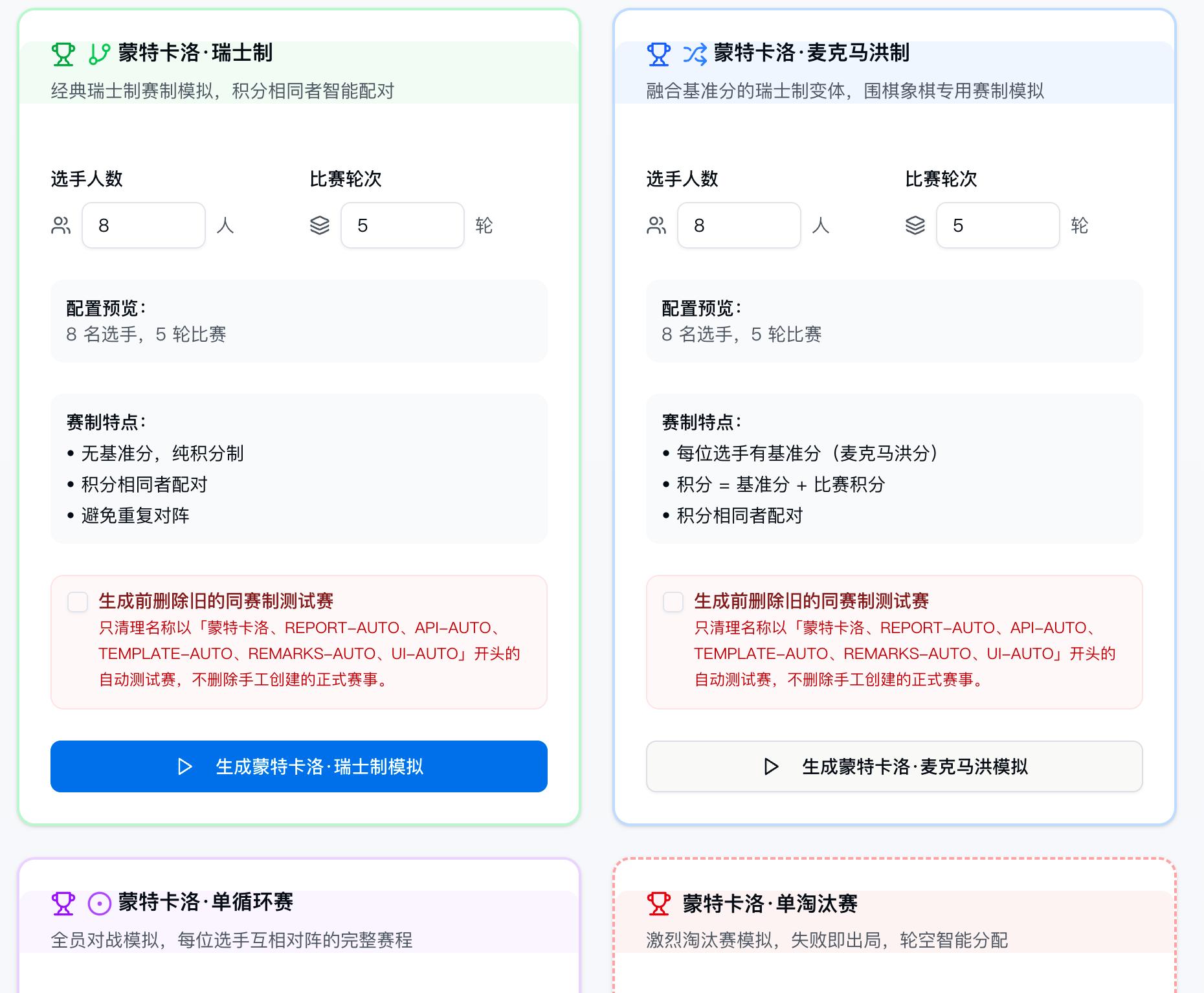

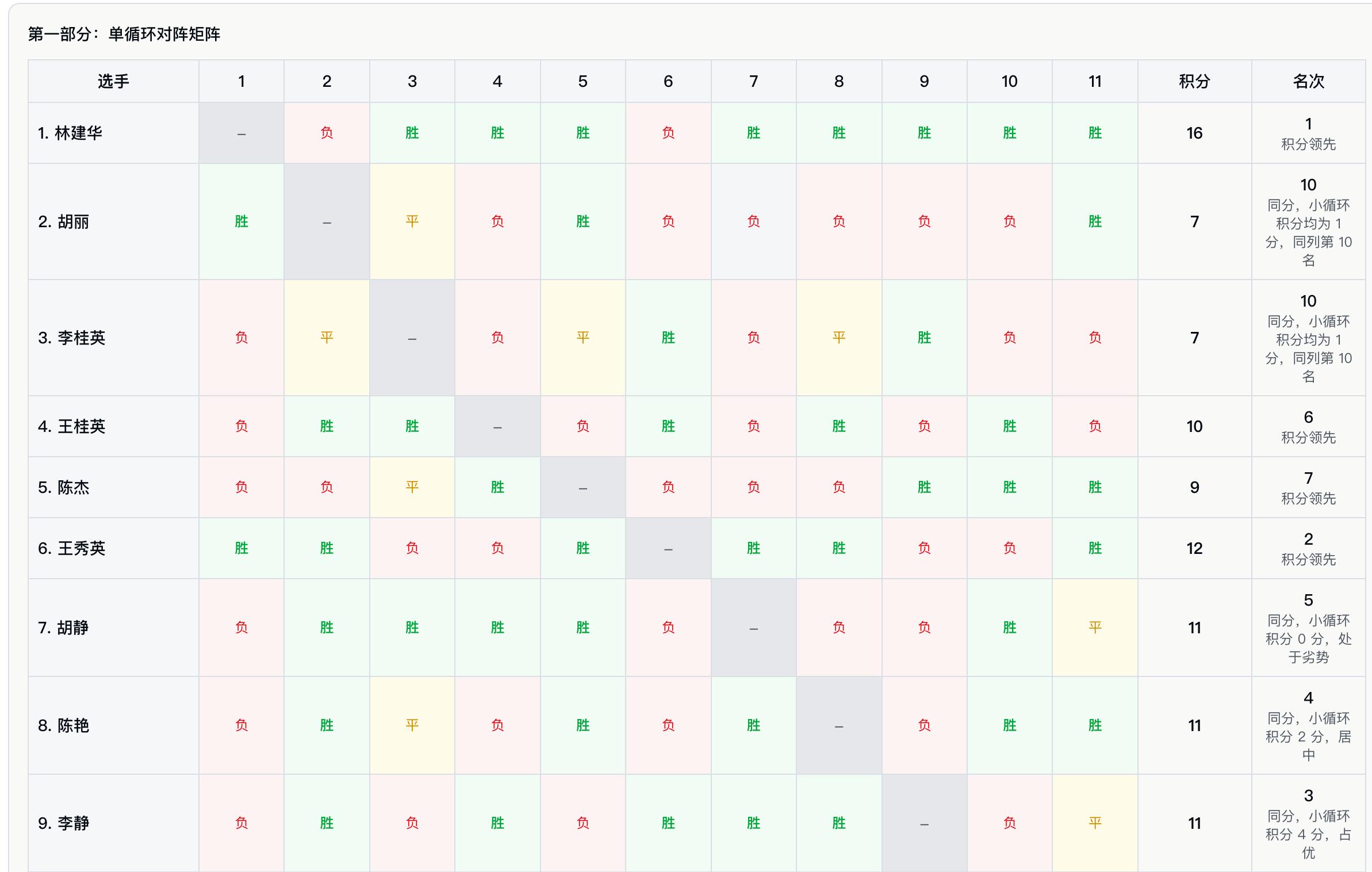

The system supports seven tournament formats, player management, intelligent scheduling, score entry, and visual display, which makes it an algorithm-heavy project with complex logic.

After diving into the project, facing challenges, and reconstructing my approach, I compiled seven heartfelt insights to share with colleagues eager to embrace AI programming.

1. Get Hands-On to Earn Your Credibility

I firmly believe that everyone can be a product creator. However, if a mentor merely stands on the sidelines offering advice, it amounts to nothing more than empty rhetoric.

By personally developing the entire system with AI, I aimed not only to prove that this approach is feasible but also to show that it holds up in a domain with a high barrier to entry: sports event scheduling involves complex professional logic and requires real industry knowledge. This tool serves as the best teaching medium and the strongest proof of concept.

One practical experience is worth a thousand words.

2. Use Simple Tools for Simple Projects, Codex for Complex Ones

In practice, the choice of model directly determines the project’s success. My path is clear and replicable:

- Simple Tools (Doubao System): For simple pages and basic process designs, the experience is remarkably friendly to AI programming novices.

- Codex + GPT-5.4 (later upgraded to 5.5): As the project grew from one tournament format to seven, with numerous testing modules and algorithmic logic, the simple tools began to falter. Frequent errors and context overload nearly derailed the project, so I migrated it to Codex, which opened up new possibilities.

A harsh but true conclusion: in this high-complexity project with multiple rounds of restructuring, Codex + GPT-5.x proved significantly more stable at maintaining long context, understanding code across files, keeping logic consistent, and iterating continuously.

Choosing the right model for complex projects is crucial for success.

3. Testing First: The Lifeline of Algorithm Projects

The essence of this system is algorithm engineering. While the scheduling logic appears simple, verifying it by hand is tiring and error-prone; combined with the long chain of “player management → intelligent scheduling → score entry → result display,” manual testing becomes prohibitively expensive.

I made a key decision: build a testing module larger than the main project. For algorithm-intensive and rule-complex systems, the scale of testing assets may approach or even exceed the main functional code, which is normal and necessary.

- Algorithm Testing: Provides a parameter input panel to verify the accuracy of scheduling results with one click.

- Interface Testing: Uses Codex’s built-in browser to automatically simulate clicks and run through the complete business flow.

This investment proved highly rewarding: most repetitive regression tests were covered by automation, so manual effort could focus on critical paths and exceptional scenarios, and overall testing efficiency improved substantially.
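As an illustration of what an algorithm test of this kind can look like, here is a minimal sketch in Python. It assumes a round-robin format (the post does not list the seven formats, so this choice is mine) and uses hypothetical names, `round_robin` and `check_round_robin`. It is not the project's actual test module. The idea is to check rules that must hold for any input, rather than comparing against hand-written expected schedules.

```python
from itertools import combinations


def round_robin(players):
    """Circle-method round robin. With an odd player count, one player rests per round."""
    roster = list(players)
    if len(roster) % 2:
        roster.append(None)  # None marks the resting slot
    n = len(roster)
    rounds = []
    for _ in range(n - 1):
        pairs = [(roster[i], roster[n - 1 - i]) for i in range(n // 2)]
        rounds.append([p for p in pairs if None not in p])
        # Keep the first seat fixed and rotate everyone else by one position.
        roster = [roster[0], roster[-1]] + roster[1:-1]
    return rounds


def check_round_robin(players, rounds):
    """Return a list of rule violations; an empty list means the schedule passed."""
    problems = []
    seen = set()
    for number, matches in enumerate(rounds, start=1):
        busy = set()
        for a, b in matches:
            if a in busy or b in busy:
                problems.append(f"round {number}: a player is booked twice")
            busy.update((a, b))
            pair = frozenset((a, b))
            if pair in seen:
                problems.append(f"round {number}: repeated pairing {a} vs {b}")
            seen.add(pair)
    expected = {frozenset(p) for p in combinations(players, 2)}
    if seen != expected:
        problems.append("some pairings are missing or unexpected")
    return problems


# Example run: sweep several field sizes, including odd ones.
for size in range(2, 12):
    field = [f"P{i}" for i in range(size)]
    assert check_round_robin(field, round_robin(field)) == []
```

A parameter input panel like the one described above could call a checker of this shape with whatever player count the tester enters.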

The testing module is not a cost but the core infrastructure of an algorithm project.

4. Codex’s Click Simulation May Drift; Manual Oversight Is Necessary

Even the strongest tools have their limitations.

Codex’s interface automation testing has a known flaw: simulated mouse clicks occasionally drift, landing in the wrong position or even hitting the delete button by mistake. This cannot be completely eliminated, so whenever Codex validates an interface, the risk of coordinate-based misclicks has to be managed. A more stable approach combines semantic selectors, stable element locators, confirmation pop-ups, and safeguards on dangerous operations.
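The two safeguards mentioned above, locating elements by a stable identifier and requiring explicit confirmation for dangerous actions, can be sketched with a small in-memory model. Everything here is hypothetical: `Button`, `FakePage`, and the `test_id` values are illustrative stand-ins, not Codex's browser API or any real automation library.

```python
from dataclasses import dataclass, field


@dataclass
class Button:
    test_id: str              # stable identifier, independent of screen position
    label: str
    destructive: bool = False


@dataclass
class FakePage:
    """In-memory stand-in for a page; a real run would drive an actual browser."""
    buttons: list
    clicked: list = field(default_factory=list)

    def click(self, test_id, confirm=False):
        matches = [b for b in self.buttons if b.test_id == test_id]
        if len(matches) != 1:
            # Fail loudly instead of clicking "something near" the target.
            raise LookupError(
                f"expected exactly one element for {test_id!r}, found {len(matches)}"
            )
        button = matches[0]
        if button.destructive and not confirm:
            raise PermissionError(f"{button.label!r} is destructive; pass confirm=True")
        self.clicked.append(test_id)
        return button


page = FakePage([
    Button("save-score", "Save"),
    Button("delete-match", "Delete", destructive=True),
])
page.click("save-score")                    # resolved by identifier, not coordinates
page.click("delete-match", confirm=True)    # destructive action needs explicit intent
```

The design point is that a misdirected click becomes an error rather than a silent deletion: an ambiguous or missing target raises, and a destructive target raises unless the caller opts in.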

5. Transform Individual AI Skills into Organizational Capabilities

While a code agent is highly effective for individual tasks, if it stays in one person’s hands it becomes a “lone hero” that cannot scale or be replicated across an organization.

As a manager, it is essential to establish organizational-level standards so that AI capabilities can be preserved, reused, and managed:

- System Framework Standards: Uniform architectural agreements to avoid disarray.

- Process Documentation Standards: Clear delivery standards to ensure output quality.

When developing applications that consume model tokens, a unified standard for text, web search, and image API keys is also a core expression of organizational strength. An efficient organization needs unified API key governance, managed by project, environment, permission, and quota, rather than the chaos of everyone using their own keys.
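To make “managed by project, environment, permission, and quota” concrete, here is a minimal sketch of a policy registry. The names `KeyPolicy` and `KeyRegistry`, the role strings, and the quota unit (calls per month) are all assumptions for illustration. A real setup would more likely sit in a secrets manager or an API gateway than in application code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyPolicy:
    project: str
    environment: str          # e.g. "dev" or "prod"
    capability: str           # e.g. "text", "web_search", "image"
    allowed_roles: frozenset
    monthly_quota: int        # maximum calls per month (assumed unit)


class KeyRegistry:
    def __init__(self):
        self._policies = {}
        self._used = {}

    def register(self, policy):
        scope = (policy.project, policy.environment, policy.capability)
        self._policies[scope] = policy
        self._used.setdefault(scope, 0)

    def authorize(self, project, environment, capability, role, calls=1):
        """Return True and record usage if the request fits the policy."""
        scope = (project, environment, capability)
        policy = self._policies.get(scope)
        if policy is None or role not in policy.allowed_roles:
            return False
        if self._used[scope] + calls > policy.monthly_quota:
            return False
        self._used[scope] += calls
        return True


registry = KeyRegistry()
registry.register(
    KeyPolicy("scheduler", "dev", "text", frozenset({"developer"}), monthly_quota=1000)
)
allowed = registry.authorize("scheduler", "dev", "text", role="developer")
```

Because every request is resolved through one registry, usage can be attributed to a project and environment instead of to whoever happened to paste a personal key.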

Standards cannot rely solely on chat context. More mature organizational practices involve embedding standards into:

- Project README;

- Development specification documents;

- Testing specifications;

- PR templates;

- Agent instruction files;

- Code review checklists;

- Automated testing and CI;

- Acceptance criteria.

Standards must not only inform Codex but also be ingrained in repositories, processes, and tests, ensuring that both AI and humans operate under the same constraints.

Upgrading AI capabilities from “personal exclusive” to “organizational foundation” is the only sustainable and scalable approach.

6. Treat AI as a Collaborator, Not a Tool—This is the Most Critical Cognitive Leap

My biggest realization this week can be summed up in one sentence:

Don’t treat AI as a laborer; treat it as a colleague and co-creator.

My approach has never been to issue a bunch of detailed commands but rather to present problems for discussion, brainstorming, and decision-making together.

This shift in mindset immediately unleashed Codex’s remarkable creativity, often exceeding my expectations.

Here’s a real example:

Swiss system stress test: scheduling 389 players over 15 rounds of matches originally took about 150 seconds.

Instead of simply ordering it to “optimize speed,” I laid out the goals, bottlenecks, and concerns, and asked it for a complete line of reasoning that we could evaluate together.

The final result: scheduling time dropped to under 3 seconds, a speedup of roughly 50x or more, and the business logic remained intact.
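For readers who want to try a stress test of the same shape (389 players, 15 rounds), here is a small timing harness. The pairing rule is a deliberately simplified greedy Swiss pairing that I am assuming for illustration: it is not the project's algorithm, it ignores real-world rules such as bye rotation, and its timings are not comparable to the 150-second and 3-second figures above.

```python
import random
import time


def swiss_round(players, scores, played):
    """Greedy pairing: rank by score, then pair each player with the highest-ranked
    unpaired opponent not met before, falling back to a rematch if none is left."""
    order = sorted(players, key=lambda p: -scores[p])
    bye = order.pop() if len(order) % 2 else None   # lowest-ranked player sits out
    pairs = []
    while order:
        a = order.pop(0)
        idx = next((i for i, b in enumerate(order) if b not in played[a]), 0)
        pairs.append((a, order.pop(idx)))
    return pairs, bye


def simulate(num_players=389, num_rounds=15, seed=0):
    rng = random.Random(seed)
    players = list(range(num_players))
    scores = {p: 0 for p in players}
    played = {p: set() for p in players}
    schedule = []
    for _ in range(num_rounds):
        pairs, bye = swiss_round(players, scores, played)
        for a, b in pairs:
            scores[rng.choice((a, b))] += 1          # random winner stands in for results
            played[a].add(b)
            played[b].add(a)
        if bye is not None:
            scores[bye] += 1                         # a bye counts as a win here
        schedule.append((pairs, bye))
    return schedule


start = time.perf_counter()
schedule = simulate()
elapsed = time.perf_counter() - start
print(f"{len(schedule)} rounds paired in {elapsed:.3f}s")
```

A harness like this gives the AI and the human a shared, repeatable number to discuss, which makes a joint review of optimization ideas much easier than a vague request to go faster.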

If you treat it as a tool, it will only execute; if you treat it as a colleague, it can co-create miracles.

7. General Abstraction Requires Active Guidance; Humans Define Problems

Launching functionality is just the first step.

Codex can help with continuously abstracting business logic into general modules, but the direction of abstraction and the value judgments must remain a human responsibility. Humans have to keep reminding the AI of this goal, reinforce its role, and consolidate decisions into long-term memory. AI can suggest abstractions and restructuring, but it typically won’t reliably and consistently take responsibility for evolving the product architecture. Whether an abstraction is worthwhile, whether it is over-designed, and whether it aligns with long-term business goals still require human judgment.

Humans are responsible for defining problems, judging value, setting boundaries, and bearing responsibility; AI is responsible for expanding solutions, accelerating execution, assisting validation, and continuous iteration.

Conclusion

The essence of Vibe Coding has never been about “using AI to write code” but rather about treating AI as a true colleague—a knowledgeable, highly capable, and always available co-creator.

Align your needs with AI just as you would with a product manager; discuss architecture with AI as you would with an IT manager; confirm quality with AI as you would with a testing manager.

When you truly treat it like a “person,” the productivity and creativity it returns will exceed your imagination.
