OpenAI Releases GPT-5.2 Codex

On December 19, 2025, OpenAI officially launched its next-generation coding model, GPT-5.2 Codex, along with a technical blog detailing the model’s positioning, capability improvements, and deployment methods.

Key Features of GPT-5.2 Codex

GPT-5.2 Codex is built on the general-purpose GPT-5.2 model and is specifically optimized for “agentic coding” scenarios, focusing on complex software engineering tasks. Compared with previous versions, the new model shows systematic improvements in long-running task execution, large-scale code changes, native Windows environment support, and cybersecurity-related capabilities.

OpenAI states that GPT-5.2 Codex introduces a native context compaction mechanism that improves how well and how efficiently it understands and uses extremely long contexts, which makes its performance more stable in long-running coding tasks that span files and modules. The model’s reliability and consistency have also improved in large-scale changes such as code refactoring and migration.

Security capabilities are also a focal point of this update. OpenAI notes that as the model’s reasoning and tool-calling abilities have strengthened, its usefulness in cybersecurity has also increased. Last week, a security researcher used GPT-5.1-Codex-Max together with Codex CLI to help identify three security vulnerabilities in the React framework. The vulnerabilities, which could lead to denial of service or source code exposure, were responsibly disclosed.

OpenAI claims that GPT-5.2 Codex is currently its strongest Codex model in terms of cybersecurity capabilities, although these capabilities remain “dual-use” in nature. According to OpenAI’s Preparedness Framework assessment, the model has not yet been classified as having “high-level” cybersecurity capability, but the company has factored the potential risks of future capability growth into its deployment strategy.

Deployment Strategy

OpenAI has opted to provide GPT-5.2 Codex through controlled channels initially. The model is now available in Codex CLI, IDE extensions, cloud environments, and code review workflows, and is open to all paid ChatGPT users starting today. Meanwhile, OpenAI is working toward safely opening API access in preparation for future third-party use.

For cybersecurity-related use cases, OpenAI has also launched an invite-only pilot program, granting limited access to vetted security researchers and organizations. This mechanism aims to support authorized defensive security research while maintaining control over the model’s usage scope and risks.

Performance Evaluation

How does GPT-5.2 Codex perform? In terms of capability integration, GPT-5.2 Codex inherits GPT-5.2’s strengths in professional reasoning and factual accuracy while integrating GPT-5.1-Codex-Max’s capabilities in agentic coding and terminal operations. OpenAI states that this combination enables the model to call tools more reliably in complex engineering tasks, understand multimodal inputs, and carry out long-horizon reasoning while using tokens efficiently.

The new model also interprets shared screenshots, technical diagrams, data charts, and user interfaces more accurately during coding. In native Windows environments, agent execution with GPT-5.2 Codex is more efficient and reliable.

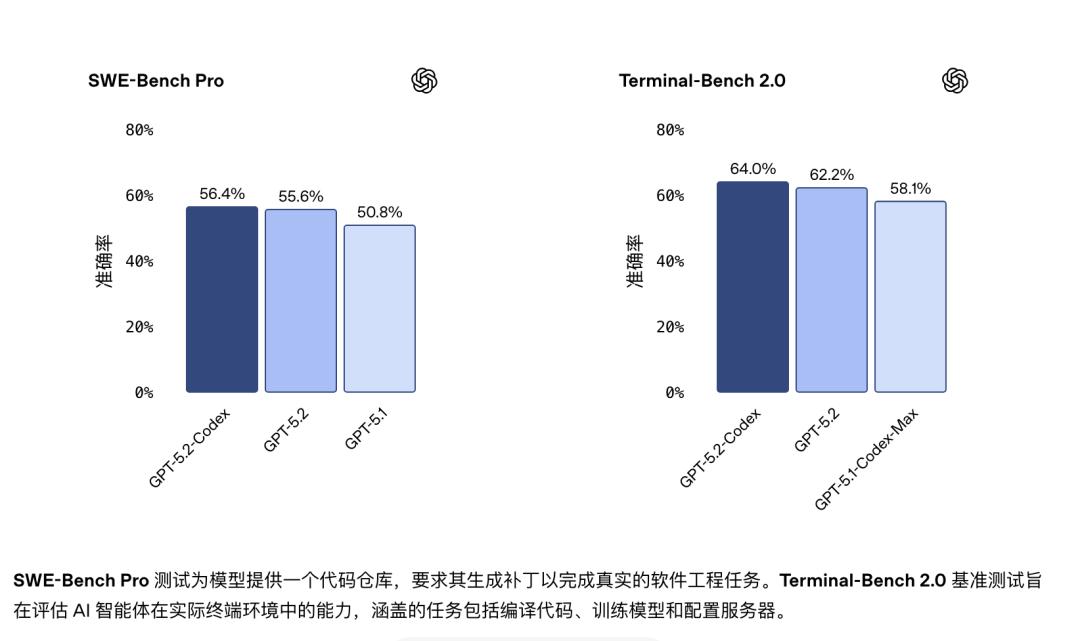

In benchmark tests, GPT-5.2 Codex was evaluated on SWE-Bench Pro and Terminal-Bench 2.0, which assess a model’s ability to perform real engineering tasks in real codebases and terminal environments. OpenAI reports that the results show overall performance improvements in these scenarios compared with previous versions.

OpenAI’s cybersecurity assessment shows a continuous enhancement in model capabilities from GPT-5-Codex to GPT-5.1-Codex-Max and now to GPT-5.2-Codex. The company anticipates that future AI models will continue along this development trajectory.

User Feedback

The release of GPT-5.2 Codex has sparked discussion across various platforms. On Reddit, some users noted that compared with the previous GPT-5.2, the new model offers about a 10% improvement in coding capabilities. Users running the model at higher configurations found it stable, predictable, and reliable, and said it provides detailed explanations of its reasoning and operational steps.

However, they also pointed out that the model consumes more tokens in operation, which could create cost pressure for individual users, hobbyist developers, and small businesses. For medium-sized enterprises dealing with highly complex, time-sensitive software engineering problems, the model’s value is clear, to the point of being “appreciated”.

Another heavy user of the model agreed, stating:

“I’ve been using the model since its release, primarily running it on mid to high configurations. In my use case, the new version shows significant improvement over GPT-5.1 Codex. I’m writing complex signal processing code and using the model for online retrieval to ensure outputs are based on real data and existing research. Overall, the performance is excellent. Although I haven’t systematically compared this version with the high or ultra-high configurations of GPT-5.2, the latter consumes a lot of tokens and runs slower. In contrast, the version I am currently using strikes a better balance in speed and cost control.”

Users have also expressed amazement at the pace of OpenAI’s product iterations. One user remarked:

“Anyone who has worked in large organizations knows that such directional shifts, even at FAANG-level companies, usually take months or longer to accomplish. In contrast, after the release of ChatGPT, Google took nearly two years to catch up significantly in technology, which is quite exaggerated—after all, the Transformer architecture was proposed by them. By comparison, OpenAI has quickly narrowed the gap in just a few months.”

OpenAI’s Funding Plans

As GPT-5.2 Codex is released, OpenAI is reportedly initiating a new round of funding, aiming to raise up to $100 billion. According to sources cited by The Wall Street Journal, the funds will support its long-term strategy for continued expansion in the AI field.

If this round of funding is successful, OpenAI’s overall valuation could rise to approximately $830 billion. Reports indicate that this funding round is still in its early stages, with transaction structures and terms yet to be finalized, leaving room for adjustments. Sources say OpenAI hopes to complete this funding round by the end of Q1 next year, but the timeline depends on market conditions and investor feedback.

If completed as planned, this would be the largest fundraising effort since OpenAI’s inception and one of the most significant capital operations among private tech companies globally. However, there remains uncertainty about whether the market has sufficient investor demand to absorb such a large volume of funding.

With public markets cautious about AI-related spending, this funding round is seen as a crucial test of OpenAI’s fundraising capabilities and long-term strategy. Recent discussions about a potential bubble in the AI industry have put pressure on the stock performance of several related tech companies. Nevertheless, OpenAI’s need for sustained capital investment in model training, computing infrastructure, and product iteration remains high.

OpenAI CEO Sam Altman has been actively engaging potential investors globally in recent years to establish a more robust capital pool. The Wall Street Journal previously reported that OpenAI is also weighing the possibility of an initial public offering (IPO) in the future. Sources indicate that in an environment of rapid model capability evolution and increasing competition, OpenAI’s funding needs have far exceeded those of traditional tech startups.

In this funding plan, SoftBank Group is seen as a key investor, reportedly agreeing to invest approximately $30 billion in OpenAI. To support this investment commitment, SoftBank sold about $5.8 billion worth of NVIDIA shares last month. According to current plans, OpenAI expects to receive the remaining approximately $22.5 billion from SoftBank by the end of this year.

In addition to SoftBank, OpenAI has been actively pursuing multiple transactions. Reports indicate that the company completed a content licensing agreement by the end of the year and secured a $1 billion investment from Disney. Sources suggest that given the massive scale of this funding round, OpenAI expects to attract sovereign wealth funds as significant investors. The company previously received funding from the UAE investment firm MGX.

These transactions show that even in a tightening overall funding environment, OpenAI still has strong appeal to investors, but whether it can sustain the funding needed for its long-term expansion plans remains a concern.
